Just build the magic

CEO: “Build me an AI Agent”

Product Leader: “What should it do?”

CEO: “It should be able to fulfill my requests using our private data and analysis to make our teams move faster”

I’ve seen a lot of AI assistant and agent initiatives begin this way - with the desire for modern AI magic without a lot of guidance. While this is generally a terrible starting point, it doesn’t mean you can’t take the intent and work towards definition up front.

I wanted to share with other product and engineering folks a workflow synthesis technique I’ve used to go from magic to executable specifications. Most of this applies equally to AI assistants and AI agents, and the two are not mutually exclusive, as OpenAI Operator has shown recently.

Just to quickly define what I mean by each:

AI Assistant: An interface, usually chat-based and either standalone or embedded, that takes unstructured requests from a user and leverages AI to provide a response. The engine for the response can be an LLM directly or it can be agentic.

AI Agent: An AI response engine that leverages a decision-making node (usually LLM-based, but it can leverage other tech) and a set of tools to work iteratively towards a response for an input.

Starting to build without clear outcomes can result in:

Never-ending development without a clear focus

Mixed opinions on whether the agent implementation was successful

Define The Expected Benefit & Stakeholders

Many organizations have gotten past the extremely vague starting point above and now start with a functionally focused agent request like “help take the burden off our sales team” or “help automate monthly services reporting”. I think it’s important to go one level deeper.

“help take the burden off our sales team” → Decrease time spent on sales data entry and ticket filing from 1000 to 100 hours.

“help automate monthly services reporting” → Fully automate the monthly 3-page summary report that currently takes 80 hours to produce. Enable on-demand intra-month compilation.

This extra detail helps drive alignment on how to quantify the benefit and sets the focus: cost reduction, enablement, or output latency improvement.
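To make the targets concrete, the two examples above can be written down as baseline and target hours. This is a minimal sketch in Python; the `BenefitTarget` name is hypothetical, and treating “fully automate” as a target of 0 hours is my assumption for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenefitTarget:
    """A quantified outcome for an agent initiative (hypothetical structure)."""
    name: str
    baseline_hours: float  # effort spent today
    target_hours: float    # effort expected once the agent is in place

    @property
    def hours_saved(self) -> float:
        return self.baseline_hours - self.target_hours

    @property
    def reduction_pct(self) -> float:
        return 100.0 * self.hours_saved / self.baseline_hours


sales_entry = BenefitTarget("Sales data entry and ticket filing", 1000, 100)
# Assumption: "fully automate" is modeled as a target of 0 hours.
monthly_report = BenefitTarget("Monthly services report", 80, 0)

for target in (sales_entry, monthly_report):
    print(f"{target.name}: save {target.hours_saved:.0f} h "
          f"({target.reduction_pct:.0f}% reduction)")
```

Writing the target this way gives stakeholders a single number to agree or disagree with before any engineering starts.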

Workflow Documentation & Synthesis

I’ve spent a good amount of time automating and productizing previously human-driven workflows. A lot of the same principles and processes apply to creating successful AI assistants and agents.

Map Current Workflows:

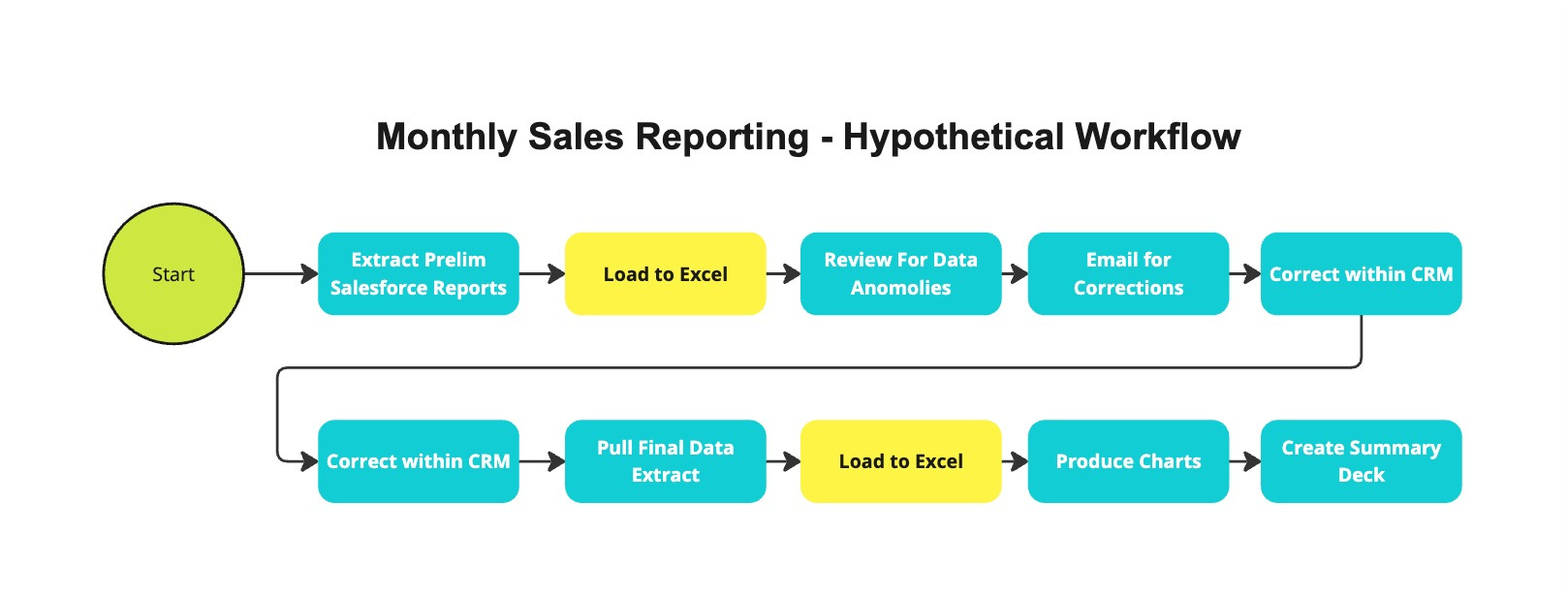

As an example, let’s look at a hypothetical end-of-month sales reporting process:

Looking at the process you can identify a few areas that you may want to replace in a more automated process (like the use of Excel as a database) and potentially flag some areas that may need user interviews for more specific details (such as data anomaly review). You can also take that workflow and start to define tools - I find it’s always best to both design and implement at a granular level as you can wrap multiple tools into a workflow fairly trivially and then decide which level to expose to the agent.
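As a rough illustration of designing at a granular level and then wrapping tools into a workflow, here is a minimal Python sketch. Every function name, the stubbed CRM rows, and the anomaly rule are hypothetical stand-ins for the steps in the reporting process, not a real integration.

```python
from typing import Callable


# Granular tools: hypothetical stand-ins for individual workflow steps.
def extract_crm_data(month: str) -> list[dict]:
    """Stub: a real version would query the CRM for the given month."""
    return [
        {"account": "Acme", "amount": 1200.0},
        {"account": "Globex", "amount": -50.0},  # suspicious negative amount
    ]


def flag_anomalies(rows: list[dict]) -> list[dict]:
    """Return rows that need human review (assumed rule: non-positive amounts)."""
    return [row for row in rows if row["amount"] <= 0]


def compile_summary(rows: list[dict], anomalies: list[dict]) -> str:
    """Produce a one-line summary of the month's data."""
    total = sum(row["amount"] for row in rows)
    return f"{len(rows)} deals, total {total:.2f}, {len(anomalies)} flagged for review"


# Workflow: a thin wrapper that chains the granular tools in a fixed order.
def monthly_sales_report(month: str) -> str:
    rows = extract_crm_data(month)
    anomalies = flag_anomalies(rows)
    return compile_summary(rows, anomalies)


# Both levels exist; which registry the agent sees is a separate decision.
GRANULAR_TOOLS: dict[str, Callable] = {
    "extract_crm_data": extract_crm_data,
    "flag_anomalies": flag_anomalies,
    "compile_summary": compile_summary,
}
WORKFLOW_TOOLS: dict[str, Callable] = {"monthly_sales_report": monthly_sales_report}

print(monthly_sales_report("2025-01"))
```

Because the workflow is only a few lines on top of the granular functions, you can build both and defer the choice of which level to expose.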

Priority Areas:

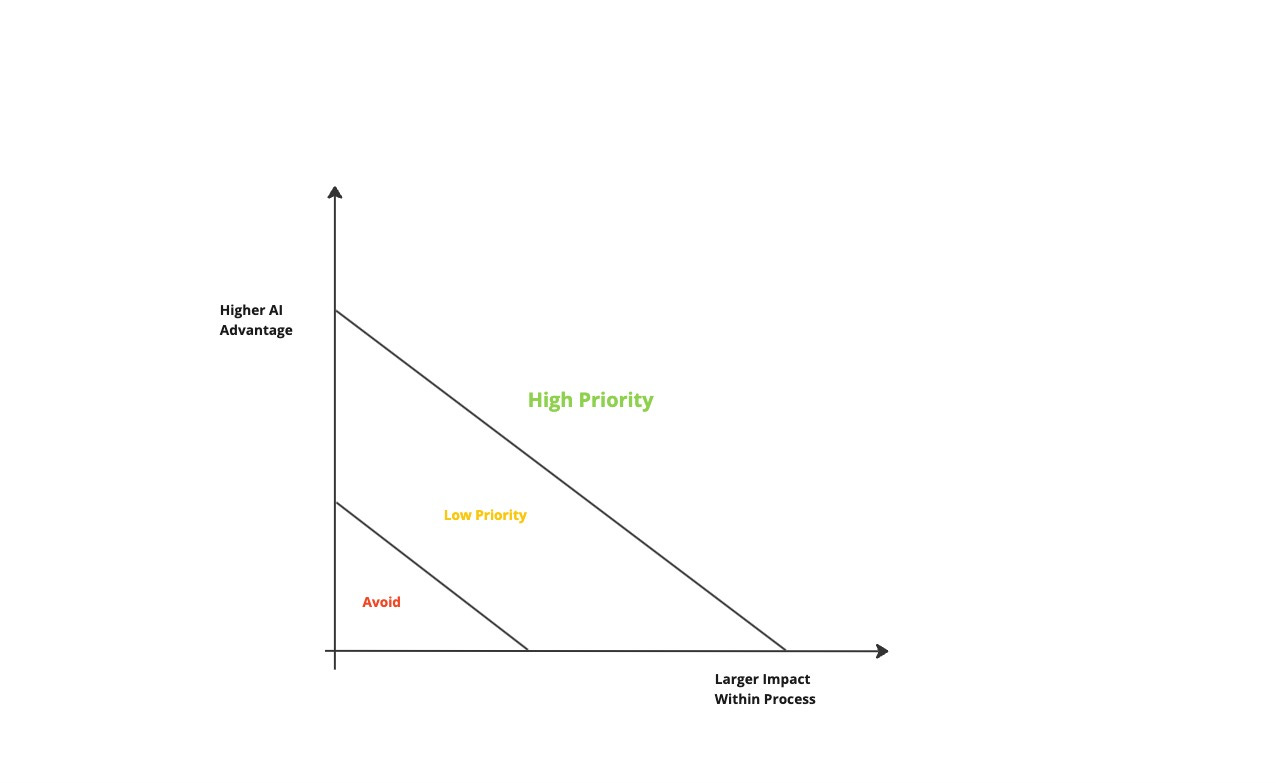

When looking at workflow components, it’s important to remember that you don’t need to do everything as there is room for iterative human-agent interaction. Priority should be given to the areas where AI outperforms humans AND is impactful to your outcome.
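One way to make the “outperforms humans AND is impactful” rule explicit is a simple score per workflow step. The sketch below is only an illustration: the 1 to 5 scales, the step names, and the ratings are all assumptions I made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowStep:
    name: str
    ai_advantage: int  # 1 (human clearly better) to 5 (AI clearly better)
    impact: int        # 1 (little effect on the outcome) to 5 (large effect)

    @property
    def priority(self) -> int:
        # Multiply rather than add, so a step must score well on BOTH axes.
        return self.ai_advantage * self.impact


# Hypothetical ratings for illustration only.
steps = [
    WorkflowStep("Format final report", ai_advantage=4, impact=2),
    WorkflowStep("Extract data from CRM", ai_advantage=5, impact=4),
    WorkflowStep("Review data anomalies", ai_advantage=2, impact=5),
]

ranked = sorted(steps, key=lambda step: step.priority, reverse=True)
for step in ranked:
    print(f"{step.priority:>2}  {step.name}")
```

Steps that land at the bottom of the ranking are good candidates to leave with a human in the first iterations.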

Extending to Multiple Workflows:

This method extends easily to multiple workflows: repeat the process with several similar flows, eliminate duplicate tools, and identify areas for generalization (does the data extractor need to fetch data from more than the CRM?).
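A quick way to spot duplicates and generalization candidates is to list the tools each workflow needs and count how often each one appears. This is a sketch with made-up workflow and tool names.

```python
from collections import Counter

# Hypothetical tool lists produced by mapping two similar workflows.
workflows = {
    "monthly_sales_report": ["crm_extractor", "data_evaluator", "report_compiler"],
    "monthly_services_report": ["ticket_extractor", "data_evaluator", "report_compiler"],
}

# Count how many workflows use each tool (set() guards against repeats within one flow).
usage = Counter(tool for tools in workflows.values() for tool in set(tools))

shared = sorted(tool for tool, count in usage.items() if count > 1)
single_use = sorted(tool for tool, count in usage.items() if count == 1)

print("Build once, reuse:", shared)
print("Check for generalization:", single_use)
```

Here the two single-use extractors are the ones to look at: they may collapse into one data extractor that accepts a source parameter.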

Diverging from human process:

The main point of documenting existing process is to understand it. This does not mean that AI agents MUST follow the same process. Once you have a well-documented set of workflows, you should evaluate which parts don’t make sense to mimic with AI (like the Excel-based analysis in this example).

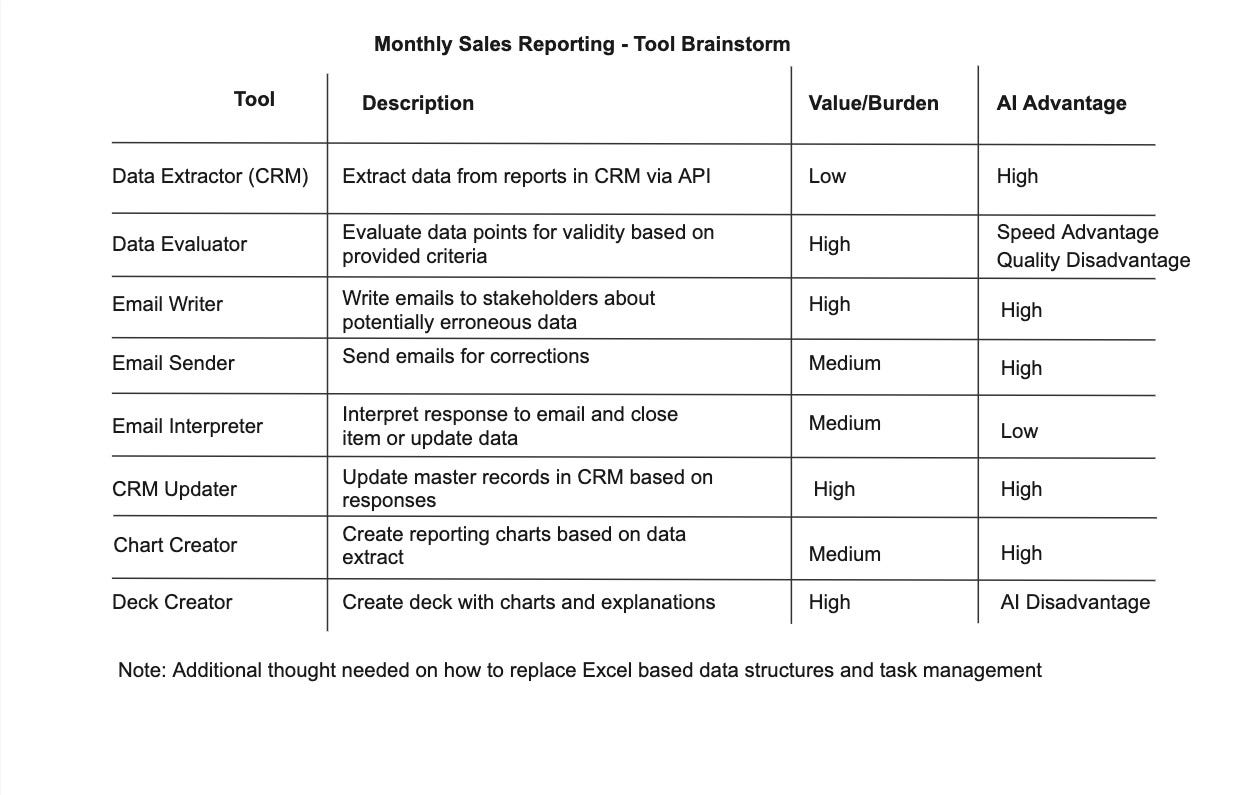

Mapping to Agentic Components

With the workflow(s) you can start to identify the tools that you might need and evaluate how impactful they will be based on improvement value, burden and advantage of AI capabilities relative to humans.

For this example - this would be a good point to go through with the engineering team and talk through options for managing tasks (like email sends and data validations) as well as managing data from external systems in process. This will likely expand the set of tools to include intermediary capabilities like Task Manager.

Tools vs Workflows:

In technical terms workflows can also be tools - but generally people think of granular components as tools. There isn’t one right answer here on how complex an operation should be at the tool level but I think there are clear tradeoffs.

Creating multi-step processes as tools minimizes the opportunity for an LLM brain to pick the wrong next step.

The more tools you create (and building many workflows rather than a few component tools can multiply the count), the more likely the agent is to select the wrong tool.

What this means is that, in general, the broader the use of your agent, the more likely you’ll want granular tools to avoid tool confusion. The narrower the focus of your agent, the more likely you’ll want complex workflows.

Define Output and Intermediary Benchmarks

When it comes to defining expectations around AI agents - earlier is better in my opinion. This helps both to set tactical requirements for engineering and to manage stakeholders, who can expect anything from a system that works perfectly to an output that’s riddled with hallucinations.

In setting metrics, I take an approach analogous to SaaS application development: you want success metrics at both the component level and the system level. For a SaaS app you may look at user outcome metrics as well as component latency or error rate. This is important because it gives you traceability for errors and improvements. The concept is far more important for AI agents because they can fail in a wider range of ways than a traditional application, where service-level failures are less abstract.

For the overall system - you should have metrics or milestones that speak to the ROI; these are usually a reduction in effort or an expansion in capabilities or frequency.

Overall System:

Time Spent Compiling Reports

Enable mid-month reporting

Number of errors found in final report

Processing Costs

Example Components:

Data Evaluator:

Accuracy relative to human (in test set)

Recall

CRM Updater:

Hard Failure Rate - Change Not Applied

Soft Failure Rate - Update Incorrect
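The component metrics above are cheap to compute once you have a labeled test set and an outcome log. Below is a minimal sketch; the function names, the boolean “flagged as anomaly” labels, and the outcome strings are assumptions for illustration.

```python
def accuracy_and_recall(human: list[bool], agent: list[bool]) -> tuple[float, float]:
    """Compare the Data Evaluator's flags with human labels on a test set."""
    if not human or len(human) != len(agent):
        raise ValueError("label lists must be non-empty and the same length")
    correct = sum(h == a for h, a in zip(human, agent))
    true_positives = sum(h and a for h, a in zip(human, agent))
    actual_positives = sum(human)
    recall = true_positives / actual_positives if actual_positives else 0.0
    return correct / len(human), recall


def failure_rates(outcomes: list[str]) -> tuple[float, float]:
    """Return (hard, soft) failure rates for the CRM Updater.

    Assumed outcome values: "ok", "hard_failure" (change not applied),
    "soft_failure" (update applied but incorrect).
    """
    if not outcomes:
        raise ValueError("outcomes must be non-empty")
    total = len(outcomes)
    return outcomes.count("hard_failure") / total, outcomes.count("soft_failure") / total


# Hypothetical test data.
human_flags = [True, True, False, False, True]
agent_flags = [True, False, False, False, True]
accuracy, recall = accuracy_and_recall(human_flags, agent_flags)

update_log = ["ok"] * 8 + ["hard_failure", "soft_failure"]
hard_rate, soft_rate = failure_rates(update_log)

print(f"Data Evaluator: accuracy={accuracy:.2f}, recall={recall:.2f}")
print(f"CRM Updater: hard={hard_rate:.0%}, soft={soft_rate:.0%}")
```

Tracking hard and soft failures separately matters because a soft failure writes wrong data silently, while a hard failure is at least visible and retryable.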

Iterative Improvement

Remember not to boil the ocean. In this example you can easily release an initial version of the agent that just does data extraction and compilation for review, then extend it to email outreach and final report compilation over multiple iterations.